As digital transformation accelerates across industries, the architecture of data centers is rapidly evolving. Two dominant models—edge data centers and hyperscale data centers—are shaping how data is processed, stored, and delivered. While both play critical roles in modern IT infrastructure, they serve fundamentally different purposes and require distinct design approaches, especially in terms of power, cooling, and scalability.

Understanding the differences between edge and hyperscale data centers is essential for enterprises, cloud providers, and infrastructure planners who are designing future-ready systems.

What Are Hyperscale Data Centers?

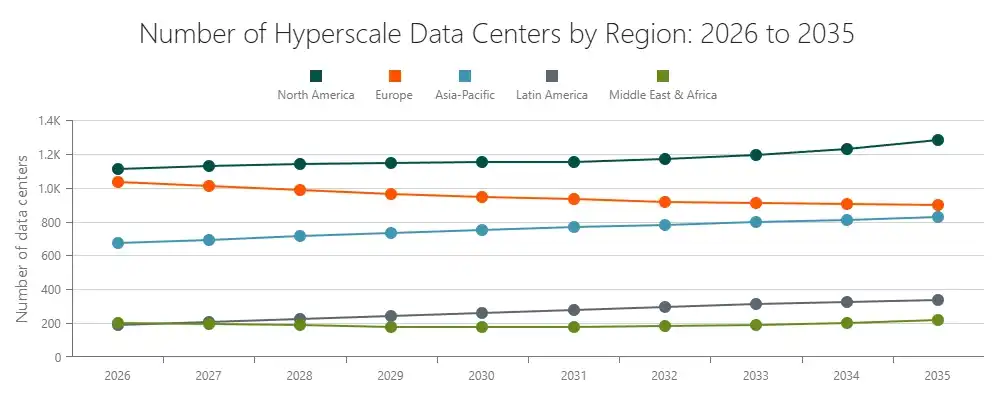

Hyperscale data centers are large-scale facilities designed to support massive workloads, typically operated by cloud service providers, internet giants, and large enterprises. These data centers are built to handle enormous volumes of data processing and storage, often supporting millions of users simultaneously across global platforms.

The defining characteristic of hyperscale data centers is their ability to scale rapidly and efficiently. They are usually located in strategic regions where land, power availability, and network connectivity can support large infrastructure expansion. Hyperscale facilities often consist of thousands of servers, high-density racks, and highly optimized power and cooling systems designed for efficiency at scale. Their architecture focuses on centralized processing, where data from many locations is aggregated and processed in a small number of very large facilities.

What Are Edge Data Centers?

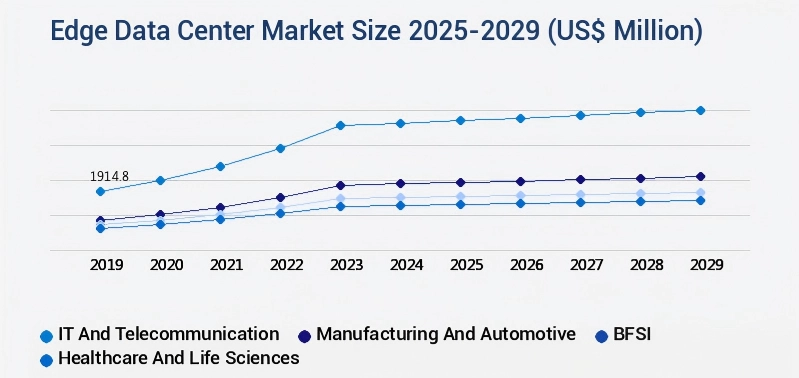

Edge data centers are smaller, distributed facilities located closer to end users or data sources. Their primary purpose is to reduce latency and improve real-time data processing by bringing computing resources closer to where data is generated.

Unlike hyperscale data centers, which prioritize centralized efficiency, edge data centers are designed for proximity and speed. They are commonly deployed in urban areas, near telecom networks, or within industrial environments where real-time processing is critical. Edge data centers support applications such as IoT, autonomous systems, smart cities, and content delivery networks, where milliseconds of delay can significantly impact performance.

Core Differences Between Edge and Hyperscale Data Centers

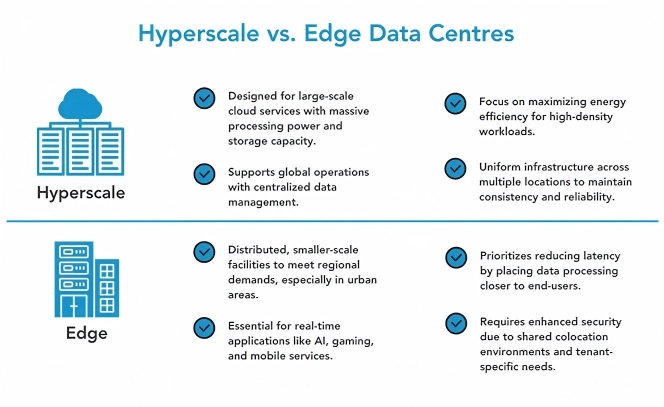

The fundamental difference between edge and hyperscale data centers lies in their architecture and operational objectives. Hyperscale data centers focus on centralized computing and large-scale efficiency, while edge data centers prioritize low latency and localized processing.

In hyperscale environments, workloads are aggregated into massive facilities that benefit from economies of scale, enabling lower cost per unit of computing power. However, this centralized model introduces latency when data must travel long distances. In contrast, edge data centers distribute workloads across multiple smaller sites, significantly reducing latency but often at the expense of higher per-unit infrastructure costs.
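To make the distance argument concrete, here is a minimal sketch of the propagation delay alone. It relies on the fact that light in optical fiber travels at roughly 200,000 km/s, or about 5 microseconds per kilometer. The two distances are hypothetical values chosen for illustration, and the function name `round_trip_ms` is an assumption of this example. Real latency is higher because routing, queuing, and processing time are ignored.

```python
# Rough round-trip propagation delay over optical fiber.
# Light in fiber travels at roughly two thirds of its vacuum speed,
# about 200,000 km/s, which is about 5 microseconds per kilometer.
FIBER_SPEED_KM_PER_S = 200_000.0


def round_trip_ms(distance_km: float) -> float:
    """Return the round-trip propagation delay in milliseconds.

    Only the fiber propagation time is counted; routing, queuing,
    and server processing are ignored, so real latency is higher.
    """
    one_way_s = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_s * 1000.0


# Hypothetical distances, chosen only for illustration.
EDGE_DISTANCE_KM = 50.0
HYPERSCALE_DISTANCE_KM = 1500.0

if __name__ == "__main__":
    print(f"edge site:       {round_trip_ms(EDGE_DISTANCE_KM):.2f} ms")
    print(f"hyperscale site: {round_trip_ms(HYPERSCALE_DISTANCE_KM):.2f} ms")
```

Under these assumed distances the physical floor is about 0.5 ms for the nearby site and about 15 ms for the distant one, before any network equipment or application time is added.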

Another key distinction is scalability. Hyperscale data centers are designed for horizontal expansion, allowing operators to add thousands of servers within a single campus. Edge data centers, on the other hand, scale by increasing the number of locations rather than expanding a single facility. This creates a decentralized network of computing nodes that collectively deliver performance closer to users.

Infrastructure Requirements: Power, Cooling, and Space

The infrastructure requirements for edge and hyperscale data centers differ significantly due to their scale and deployment environments. Hyperscale data centers require extremely high-capacity power systems, often supported by large-scale substations, centralized UPS systems, and backup generators. Their design emphasizes efficiency, with advanced cooling technologies such as liquid cooling or optimized airflow systems to manage high-density loads.

Edge data centers, in contrast, operate in space-constrained environments where flexibility and compact design are critical. Power infrastructure in edge facilities must be highly reliable but also modular and easy to deploy. Unlike hyperscale facilities that can rely on large centralized systems, edge data centers often benefit from modular UPS solutions that can be quickly installed, scaled, and maintained without downtime.

Cooling strategies also differ. While hyperscale data centers can deploy complex cooling systems at scale, edge facilities require compact, efficient cooling solutions such as in-row cooling or integrated precision air conditioning systems to maintain stable operating conditions in smaller footprints.

Latency, Performance, and Application Scenarios

Latency is one of the most critical factors distinguishing edge from hyperscale data centers. Hyperscale facilities are optimized for bulk processing and large-scale data storage, making them ideal for applications such as cloud computing, big data analytics, and enterprise workloads that do not require real-time response.

Edge data centers, however, are designed to handle latency-sensitive applications. By processing data closer to the source, edge computing significantly reduces response times, enabling real-time applications such as autonomous driving, industrial automation, augmented reality, and smart infrastructure systems.

In many modern architectures, edge and hyperscale data centers are not competing models but complementary components. Data is often processed at the edge for immediate response and then transmitted to hyperscale facilities for deeper analysis, storage, and long-term processing.
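The division of labor described above can be sketched as follows. This is an illustrative example, not a reference design: the `Reading` type, the `process_at_edge` function, and the alarm threshold are all hypothetical names and values introduced here. The edge node acts on urgent readings locally and builds a compact summary, which is what would be forwarded to the central facility for storage and deeper analysis.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    """A single sensor measurement (hypothetical type for this sketch)."""
    sensor_id: str
    value: float


def process_at_edge(readings, alarm_threshold):
    """Handle the latency-sensitive part locally and build a summary.

    Returns (alarms, summary). The alarms are acted on immediately at
    the edge; the summary is the compact record that would be sent on
    to the central facility.
    """
    alarms = [r for r in readings if r.value > alarm_threshold]
    summary = {
        "count": len(readings),
        "mean": mean(r.value for r in readings) if readings else None,
        "max": max((r.value for r in readings), default=None),
    }
    return alarms, summary


if __name__ == "__main__":
    sample = [Reading("t1", 20.0), Reading("t2", 30.0), Reading("t3", 100.0)]
    alarms, summary = process_at_edge(sample, alarm_threshold=80.0)
    print("handle locally:", [a.sensor_id for a in alarms])
    print("forward to core:", summary)
```

Sending a summary rather than every raw reading is one possible choice; a deployment that needs the raw data for long-term analysis would forward it in batches instead.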

Scalability and Deployment Strategy

Scalability in hyperscale data centers is achieved through large-scale expansion within a single location. Operators can continuously add servers, racks, and power capacity within a centralized campus, benefiting from standardized design and operational efficiency.

In contrast, edge data centers scale through geographic distribution. As demand grows, new edge nodes are deployed closer to users or devices, creating a network of interconnected facilities. This distributed model requires infrastructure solutions that are easy to replicate, deploy quickly, and operate with minimal on-site intervention.

This is where modular infrastructure becomes particularly important. Modular UPS systems, prefabricated data center units, and standardized cooling solutions enable faster deployment and consistent performance across multiple edge locations.

Power Architecture Considerations in Edge vs Hyperscale

Power architecture is a critical differentiator between edge and hyperscale data centers. Hyperscale facilities often use centralized UPS systems combined with high-capacity backup generators to support large, continuous loads. Their focus is on efficiency and cost optimization at scale.

Edge data centers require a different approach. Due to their distributed nature and limited on-site resources, they demand highly reliable, compact, and scalable power solutions. Modular UPS systems are especially well-suited for edge deployments because they allow capacity to be added incrementally and support maintenance without service interruption. This flexibility ensures that edge facilities can adapt to changing load requirements without significant infrastructure changes.
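As a rough illustration of incremental capacity, the sketch below counts how many UPS modules a site needs for a given load with N+1 redundancy, meaning one module more than the load strictly requires. The function name `modules_required`, the 25 kW module rating, and the load figures are assumptions made for this example. Real sizing also has to account for power factor, battery runtime, and derating, which are left out here.

```python
import math


def modules_required(load_kw: float, module_kw: float, redundant: int = 1) -> int:
    """Return the module count for a load with N+redundant redundancy.

    N is the smallest number of modules whose combined rating covers
    the load; `redundant` extra modules are added on top of that.
    """
    if load_kw < 0 or module_kw <= 0 or redundant < 0:
        raise ValueError("load must be >= 0, module rating > 0, redundant >= 0")
    needed = math.ceil(load_kw / module_kw)
    return needed + redundant


if __name__ == "__main__":
    MODULE_KW = 25.0  # hypothetical module rating
    today = modules_required(40.0, MODULE_KW)   # initial load
    later = modules_required(90.0, MODULE_KW)   # load after growth
    print(f"modules today: {today}, after growth: {later}, to add: {later - today}")
```

With these assumed figures the site starts with three modules and reaches five after the load grows, so capacity is added two modules at a time rather than by replacing the whole system.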

Additionally, edge environments often face less predictable power conditions than purpose-built hyperscale campuses, making power quality and redundancy even more critical. A well-designed UPS system helps maintain stable operation even in challenging environments.

Choosing the Right Architecture for Your Business

The choice between edge and hyperscale data centers depends on the specific requirements of the application, including latency sensitivity, scalability needs, and geographic distribution. Businesses that rely on real-time processing and low latency will benefit from edge deployments, while those requiring large-scale data processing and storage will continue to rely on hyperscale infrastructure.

In many cases, the optimal strategy is a hybrid approach that combines both models. By leveraging edge data centers for real-time processing and hyperscale facilities for centralized workloads, organizations can achieve both performance and efficiency.
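One simple way to reason about a hybrid split is to compare each workload's latency budget with the round-trip time to the central facility. The sketch below is a rule of thumb under stated assumptions, not a planning method from this article: the `Workload` type, the `place` function, and all numbers are hypothetical, and a real decision would also weigh cost, data volume, and regulatory constraints.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """A workload and its response-time requirement (hypothetical type)."""
    name: str
    latency_budget_ms: float  # maximum acceptable response time


def place(workloads, core_round_trip_ms):
    """Split workloads between the edge and hyperscale tiers.

    A workload goes to the edge only when the round trip to the
    central facility would exceed its latency budget.
    """
    plan = {"edge": [], "hyperscale": []}
    for w in workloads:
        tier = "edge" if w.latency_budget_ms < core_round_trip_ms else "hyperscale"
        plan[tier].append(w.name)
    return plan


if __name__ == "__main__":
    workloads = [
        Workload("machine control loop", 5.0),
        Workload("video analytics", 30.0),
        Workload("nightly reporting", 60_000.0),
    ]
    # 40 ms is an assumed round trip to the central site.
    print(place(workloads, core_round_trip_ms=40.0))
```

In this example the control loop and video analytics land at the edge, while nightly reporting stays in the hyperscale tier where capacity is cheaper.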

Conclusion: Convergence of Edge and Hyperscale Data Centers

Edge and hyperscale data centers represent two essential pillars of modern digital infrastructure. Rather than replacing one another, they are increasingly integrated into unified architectures that balance performance, scalability, and efficiency.

As this convergence continues, the demand for flexible, scalable, and reliable infrastructure solutions will grow. Power systems, in particular, must adapt to support both centralized hyperscale environments and distributed edge deployments. Modular UPS solutions are emerging as a key enabler in this transition, providing the flexibility and reliability required to support next-generation data center architectures.