The Structural Shift in Power Demand Driven by AI Workloads

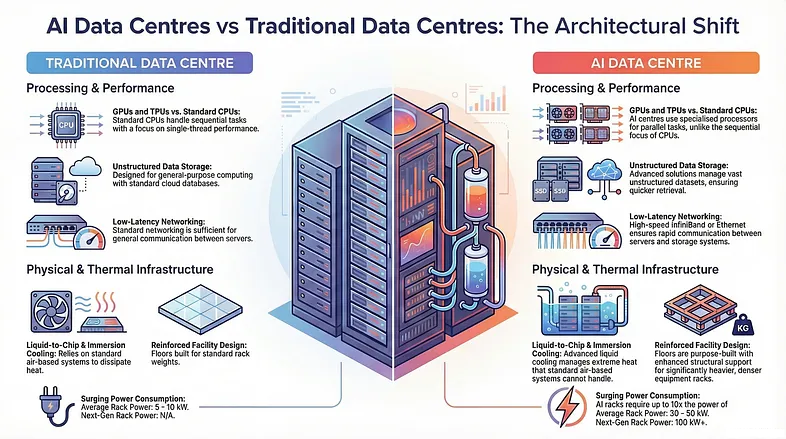

AI data centers are fundamentally redefining how electrical infrastructure is designed, deployed, and operated. Unlike traditional enterprise or cloud environments, which typically run predictable and moderately fluctuating workloads, AI computing introduces sustained high-density processing that pushes power systems to their operational limits. GPU clusters used for model training often run at near-constant utilization, creating a continuous high-load environment rather than the intermittent demand patterns seen in conventional workloads.

This shift is not only about higher total power consumption but also about how that power is consumed. AI workloads tend to scale horizontally across large clusters, meaning that power demand increases in synchronized bursts across hundreds or thousands of nodes simultaneously. This synchronized behavior creates stress conditions for upstream power infrastructure, particularly UPS systems, which must now handle both sustained load and sudden load convergence without degradation in output stability.
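The synchronized-burst effect can be made concrete with a toy model. The sketch below treats each node as stepping instantly from idle to full load at its start time. It then reports the largest aggregate load increase that lands inside any short window. The cluster size (1,000 nodes), the per-node step (6 kW), and the 100 ms window are hypothetical numbers chosen for illustration. They are not measurements from any real deployment.

```python
# Toy model of load convergence: each node steps from idle to full load at
# its start time; we find the largest load increase inside any sliding
# window of `window_ms` milliseconds. All numbers are hypothetical.

def max_step_kw(start_times_ms, node_step_kw, window_ms):
    """Return the largest aggregate load step (kW) within any window."""
    times = sorted(start_times_ms)
    best = 0
    j = 0  # index of the earliest start still inside the current window
    for i, t in enumerate(times):
        # Drop starts that fall outside the window (t - window_ms, t].
        while times[j] <= t - window_ms:
            j += 1
        best = max(best, i - j + 1)
    return best * node_step_kw


NODES = 1000          # hypothetical cluster size
NODE_STEP_KW = 6.0    # hypothetical idle-to-full delta per node
WINDOW_MS = 100       # hypothetical observation window

synchronized = [0] * NODES                  # every node starts together
staggered = [i * 10 for i in range(NODES)]  # one node starts every 10 ms

print(max_step_kw(synchronized, NODE_STEP_KW, WINDOW_MS))
print(max_step_kw(staggered, NODE_STEP_KW, WINDOW_MS))
```

Under these assumptions, the synchronized case hits the UPS with a 6,000 kW step inside a single window. The evenly staggered case never exceeds 60 kW per window. The total energy is identical in both cases; only the timing differs.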

Why Traditional UPS Architectures Are No Longer Sufficient

Conventional UPS systems were designed around relatively stable IT environments where load growth was gradual and predictable. These systems typically prioritized basic backup functionality, short-term ride-through capability, and stable output during utility failure. However, AI data centers introduce conditions that expose the limitations of these legacy designs.

One of the key challenges is power density. AI racks can easily exceed 50 kW per cabinet, with next-generation deployments pushing even higher thresholds. This level of density creates concentrated thermal and electrical stress that traditional centralized UPS architectures struggle to accommodate efficiently. Additionally, AI workloads do not follow smooth load curves; instead, they exhibit rapid transitions between different computation phases, requiring UPS systems to absorb sudden load steps quickly while holding output voltage within tolerance and without sacrificing efficiency.
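To get a feel for what 50 kW per cabinet means electrically, the standard formula for a balanced three-phase load gives the line current as I = P / (√3 × V_LL × PF). The 415 V line-to-line supply and 0.95 power factor used below are assumptions for illustration. Actual distribution voltages and power factors vary by site and region.

```python
import math

def rack_current_a(power_kw, line_voltage_v=415.0, power_factor=0.95):
    """Line current (A) for a balanced three-phase load:
    I = P / (sqrt(3) * V_LL * PF). Voltage and PF defaults are assumptions."""
    return power_kw * 1000.0 / (math.sqrt(3) * line_voltage_v * power_factor)

# Compare a few rack densities under the same assumed supply.
for kw in (10, 50, 100):
    print(f"{kw:>4} kW rack -> {rack_current_a(kw):6.1f} A per phase")
```

With these assumptions, a 50 kW rack draws roughly 73 A per phase. Current scales linearly with rack power, so doubling density doubles the current that cabling, breakers, and the upstream UPS must carry.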

As a result, UPS systems are no longer evaluated solely on backup duration or redundancy levels. Their ability to operate efficiently under high-density, dynamic load conditions has become equally important.

The Rise of Modular UPS as a Scalable Infrastructure Model

To meet the evolving demands of AI data centers, modular UPS architecture has emerged as the dominant design approach. Unlike traditional monolithic systems, modular UPS platforms allow power capacity to be expanded incrementally through hot-swappable modules. This aligns naturally with the phased deployment model of AI infrastructure, where compute clusters are continuously scaled based on demand.

In practical terms, modular UPS systems provide both operational flexibility and capital efficiency. Data center operators no longer need to over-provision power infrastructure at the initial stage of deployment. Instead, they can scale power capacity in parallel with GPU cluster expansion, ensuring that electrical infrastructure grows in sync with compute demand.

Another critical advantage of modular systems is redundancy optimization. By distributing load across multiple independent power modules, these systems reduce exposure to single points of failure while maintaining the high availability that AI training workloads require.
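Incremental scaling and redundancy can both be expressed as a simple sizing rule. In an N+1 configuration, N modules carry the load and one extra module covers a single module failure. The sketch below applies that rule across a few build-out phases. The 50 kW module rating and the phase loads are hypothetical. Real module ratings differ by product.

```python
import math

def modules_required(load_kw, module_kw, redundant=1):
    """Modules needed to carry `load_kw` in an N+`redundant` configuration."""
    if module_kw <= 0 or load_kw < 0 or redundant < 0:
        raise ValueError("module_kw must be positive; load and redundancy non-negative")
    n = math.ceil(load_kw / module_kw)  # N: modules needed for the load alone
    return n + redundant


def survives_module_loss(load_kw, module_kw, installed, failed=1):
    """True if the remaining modules still cover the load after `failed` losses."""
    return (installed - failed) * module_kw >= load_kw


MODULE_KW = 50.0  # hypothetical module rating
for phase_load_kw in (200, 420, 800):  # hypothetical build-out phases
    count = modules_required(phase_load_kw, MODULE_KW)
    print(phase_load_kw, "kW ->", count, "modules; survives one module loss:",
          survives_module_loss(phase_load_kw, MODULE_KW, count))
```

Each phase adds only the modules the new load requires, such as 5, then 10, then 17 in this example. A monolithic system would have to be sized for the final phase from day one.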

Efficiency Optimization Under Continuous High Load Conditions

Energy efficiency has become a central concern in AI data center design, and UPS systems play a significant role in determining overall Power Usage Effectiveness (PUE). Unlike traditional workloads that fluctuate throughout the day, AI clusters often operate at sustained high utilization, which places UPS systems in a continuous high-load operating state.

Under these conditions, even small efficiency losses become significant at scale. A difference of one or two percentage points in UPS efficiency can translate into substantial energy waste when multiplied across megawatt-scale facilities. This has driven the adoption of high-efficiency online UPS systems capable of maintaining near-peak performance across a wide load range.
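The scale of a one- or two-point efficiency gap is easy to check. A UPS delivering P_out at efficiency η dissipates P_out / η − P_out as heat. That loss is part of the facility overhead in PUE, defined as total facility energy divided by IT equipment energy. The sketch below assumes a hypothetical 10 MW IT load running continuously for a full year. It compares 96% and 98% efficiency, both chosen only for illustration.

```python
HOURS_PER_YEAR = 8760

def ups_loss_kw(it_load_kw, efficiency):
    """Power dissipated in the UPS while delivering `it_load_kw` at `efficiency`."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return it_load_kw / efficiency - it_load_kw

IT_LOAD_KW = 10_000  # hypothetical 10 MW IT load at constant utilization
loss_96 = ups_loss_kw(IT_LOAD_KW, 0.96)
loss_98 = ups_loss_kw(IT_LOAD_KW, 0.98)
extra_mwh = (loss_96 - loss_98) * HOURS_PER_YEAR / 1000  # kWh -> MWh

print(f"96%: {loss_96:.0f} kW lost, 98%: {loss_98:.0f} kW lost")
print(f"Extra energy per year at 96%: {extra_mwh:.0f} MWh")
```

Under these assumptions, two points of efficiency amount to roughly 213 kW of continuous loss, or about 1.86 GWh per year. Cooling must then remove that heat, which adds further overhead.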

In addition to efficiency under load, part-load performance has also become increasingly important. AI data centers frequently experience variable utilization during model training cycles, checkpointing phases, or workload scheduling changes. UPS systems must therefore keep efficiency high and relatively flat even when operating well below maximum capacity.
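A common way to reason about part-load behavior is to split UPS losses into three parts. Fixed losses are present even at zero load. Proportional losses scale with load. Square-law losses scale with the square of load. The coefficients below are hypothetical, per-unit values chosen for illustration. Real efficiency curves come from vendor test data.

```python
import math

# Hypothetical loss coefficients, in per-unit of rated output.
NO_LOAD = 0.010       # fixed losses present even at zero load
PROPORTIONAL = 0.010  # losses that scale linearly with load
SQUARE_LAW = 0.020    # losses that scale with the square of load

def efficiency(load_pu):
    """UPS efficiency at `load_pu` (fraction of rated output, 0 < load_pu <= 1)."""
    losses = NO_LOAD + PROPORTIONAL * load_pu + SQUARE_LAW * load_pu ** 2
    return load_pu / (load_pu + losses)

for load in (0.1, 0.25, 0.5, 0.75, 1.0):
    print(f"{load:>5.0%} load: {efficiency(load):.2%}")

# Efficiency peaks where fixed and square-law losses balance: sqrt(NO_LOAD / SQUARE_LAW).
print(f"peak near {math.sqrt(NO_LOAD / SQUARE_LAW):.0%} load")
```

The fixed-loss term dominates at light load, which is why efficiency falls off sharply near 10%. A design with lower no-load losses flattens the low end of the curve.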

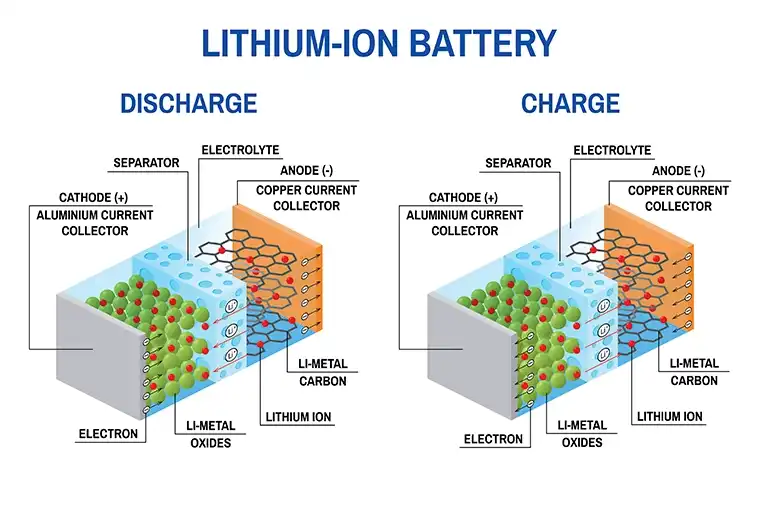

Battery Technology Transition: From Lead-Acid to Lithium-Ion Systems

Battery technology is undergoing a parallel transformation in response to AI-driven infrastructure demands. Traditional lead-acid batteries, while still widely used in legacy systems, are increasingly being replaced by lithium-ion solutions in modern AI data centers.

The primary advantage of lithium-ion batteries lies in their higher energy density and faster charge-discharge cycles. In environments where rapid recovery after power disturbances is critical, lithium-ion systems provide a significant operational advantage. They also require less physical space, which is particularly valuable in high-density AI facilities where real estate within power rooms is limited.

Furthermore, lithium-ion integration aligns more effectively with modular UPS designs, enabling tighter system integration and improved lifecycle management through advanced battery monitoring systems.

Digitalization of UPS Systems and Intelligent Power Management

Modern UPS systems are no longer isolated electrical devices; they are becoming fully integrated digital components within data center infrastructure ecosystems. Through advanced monitoring and control interfaces, UPS units now provide real-time visibility into power consumption, load distribution, and system health across entire facilities.

This digital transformation enables predictive maintenance capabilities, where potential failures can be identified before they impact operations. In AI data centers, where downtime can result in significant computational and financial loss, this predictive capability is becoming increasingly critical.
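One simple form of predictive maintenance is trend extrapolation on a health indicator. Rising internal resistance is a commonly tracked sign of battery aging. The sketch below fits a least-squares line to periodic readings and estimates how many days remain before the trend crosses an alarm threshold. The readings, the 3.6 mΩ threshold, and the use of a plain linear trend are all hypothetical. Production systems rely on vendor-specific telemetry and usually richer models.

```python
def days_until_threshold(days, values, threshold):
    """Fit a least-squares line to (days, values) and estimate how many days
    after the last sample the trend crosses `threshold`.

    `days` is assumed to be in ascending order. Returns None if the trend is
    flat or falling, meaning no crossing is projected.
    """
    n = len(days)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired samples")
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    if sxx == 0:
        raise ValueError("sample days must not all be identical")
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing_day = (threshold - intercept) / slope
    return max(0.0, crossing_day - days[-1])

# Hypothetical monthly internal-resistance readings (milliohms) for one battery string.
days = [0, 30, 60, 90, 120]
resistance_mohm = [3.0, 3.1, 3.2, 3.3, 3.4]
ALARM_MOHM = 3.6  # hypothetical maintenance threshold

print(days_until_threshold(days, resistance_mohm, ALARM_MOHM))
```

For these sample readings, the projection is about 60 days. That leaves time to schedule a replacement before the string degrades further.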

In more advanced implementations, UPS systems are also integrated into broader data center infrastructure management (DCIM) platforms, allowing coordinated optimization of power, cooling, and compute resources.

Thermal Constraints and the Convergence of Power and Cooling Systems

As AI workloads continue to increase in density, thermal management has become tightly coupled with power infrastructure design. UPS systems must now operate within environments that are increasingly dominated by liquid cooling and high-efficiency thermal distribution systems.

This convergence of power and cooling infrastructure requires UPS designs that are not only electrically efficient but also physically adaptable to compact, high-density environments. Reduced footprint, improved thermal resilience, and compatibility with advanced cooling systems are becoming essential design criteria.

Grid Interaction and the Role of UPS in Energy Stability

At the macro level, AI data centers are becoming significant energy consumers that can influence local grid stability. Large-scale AI training clusters draw power at levels comparable to industrial facilities, creating new challenges for energy distribution networks.

UPS systems are increasingly expected to play an active role in stabilizing power input, mitigating fluctuations, and supporting integration with on-site energy storage or renewable energy systems. In some cases, UPS infrastructure is evolving into hybrid energy management systems capable of participating in demand response programs and grid balancing strategies.
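Demand-response participation often takes the form of peak shaving. The battery discharges when facility draw would exceed a grid limit and recharges when there is headroom. A reserve is held back so backup ride-through is never compromised. The sketch below illustrates that dispatch logic under strong simplifying assumptions: a lossless battery, no limit on charge or discharge power, and fixed time steps. The load profile, grid cap, capacity, and reserve are hypothetical.

```python
def peak_shave(load_kw, grid_cap_kw, capacity_kwh, soc_kwh, reserve_kwh, step_h):
    """Return (grid draw per step in kW, final state of charge in kWh).

    Discharges the battery when load exceeds `grid_cap_kw`, but never below
    `reserve_kwh`, which stays available for backup. Recharges when load is
    under the cap. Simplifications: lossless battery, no power-rating limit.
    """
    grid_kw = []
    for p in load_kw:
        if p > grid_cap_kw:
            available_kw = max(0.0, soc_kwh - reserve_kwh) / step_h
            discharge_kw = min(p - grid_cap_kw, available_kw)
            soc_kwh -= discharge_kw * step_h
            grid_kw.append(p - discharge_kw)
        else:
            room_kw = (capacity_kwh - soc_kwh) / step_h
            charge_kw = min(grid_cap_kw - p, room_kw)
            soc_kwh += charge_kw * step_h
            grid_kw.append(p + charge_kw)
    return grid_kw, soc_kwh


# Hypothetical 15-minute load profile (kW) against a 1,000 kW grid cap.
profile = [800, 1200, 1300, 900]
draw, final_soc = peak_shave(profile, grid_cap_kw=1000, capacity_kwh=500,
                             soc_kwh=500, reserve_kwh=300, step_h=0.25)
print(draw, final_soc)
```

In this example, grid draw never exceeds the 1,000 kW cap, and the battery never drops below its 300 kWh backup reserve. A real system would also account for conversion losses, battery power ratings, and utility program rules.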

Conclusion: UPS as a Core Enabler of AI Infrastructure

The evolution of AI data centers is fundamentally reshaping the role of UPS systems. What was once a passive backup technology is now becoming a dynamic, intelligent, and scalable component of critical infrastructure.

From modular scalability and lithium-ion integration to digital monitoring and grid interaction, UPS systems are undergoing a comprehensive transformation driven by the demands of AI computing. As AI workloads continue to expand in scale and complexity, the importance of advanced UPS architecture will only increase, positioning it as a foundational element in the next generation of global data center infrastructure.