The Transformation of Data Centers in the AI Era

Artificial intelligence is reshaping how data centers are designed and operated. Unlike traditional IT workloads, AI applications, especially large-scale training and inference, require very high computational density delivered by GPUs and specialized accelerators.

The rapid adoption of platforms built around technologies from NVIDIA is pushing rack densities far beyond traditional limits, often exceeding 50kW per rack. This shift is structural rather than incremental. As a result, data center cooling and power systems must evolve from independent subsystems into a tightly integrated architecture capable of handling extreme and dynamic loads.

Rising Power Demand in AI Data Centers

The rapid growth of AI workloads has made power infrastructure one of the most critical components of a modern data center. Traditional racks operating at 5–10kW no longer represent current demand: AI clusters routinely reach 30kW to 80kW per rack.

To support this level of consumption, high-efficiency modular UPS systems have become essential. These systems ensure continuous and clean power through true online double conversion while maintaining efficiency levels approaching 97%. In parallel, modular power distribution architectures are being adopted to allow flexible scaling without downtime.
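
To make these figures concrete, here is a minimal sizing sketch. It assumes a hypothetical row of ten 80kW racks and hypothetical 100kW UPS modules in an N+1 arrangement; the module rating, rack count, and function names are illustrative assumptions, not values from any specific product.

```python
import math


def ups_modules_needed(load_kw: float, module_kw: float, redundant: int = 1) -> int:
    """Modules required to carry the load, plus spare modules (N+1 by default)."""
    return math.ceil(load_kw / module_kw) + redundant


def ups_loss_kw(load_kw: float, efficiency: float) -> float:
    """Power dissipated as heat inside the UPS while delivering load_kw."""
    input_kw = load_kw / efficiency
    return input_kw - load_kw


# Assumed example: ten racks at 80 kW each, 100 kW modules, 97% efficiency.
load_kw = 10 * 80.0
modules = ups_modules_needed(load_kw, module_kw=100.0)
loss_kw = ups_loss_kw(load_kw, efficiency=0.97)

print(f"UPS modules (N+1): {modules}")
print(f"UPS losses at 97%: {loss_kw:.1f} kW")
```

Under these assumptions the row needs nine modules, and the UPS itself dissipates roughly 25kW as heat, which the cooling system also has to remove.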

Compared to conventional infrastructure approaches commonly seen in solutions from providers like Vertiv, modular integrated power systems offer greater adaptability for rapidly evolving AI environments. Additionally, lithium-ion battery technologies are increasingly preferred due to their higher energy density, longer lifecycle, and reduced footprint, making them ideal for high-performance applications.

Cooling Challenges Driven by High-Density Computing

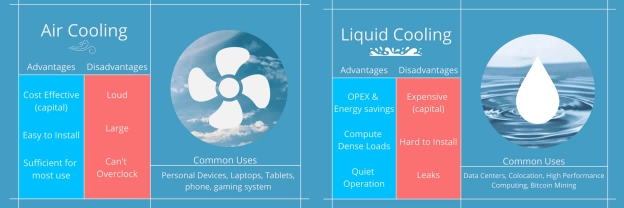

As power density increases, thermal management becomes a primary constraint. Traditional air cooling systems begin to lose effectiveness beyond 20kW per rack, which is significantly below the requirements of most AI deployments.
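
A rough way to see why air runs out of headroom is the sensible heat relation: heat removed equals air density times volumetric flow times specific heat times temperature rise. The sketch below applies it with assumed values (air at about 1.2 kg/m³ and 1.005 kJ/(kg·K), and a 12 K rise across the rack); it is an illustration, not a design calculation.

```python
AIR_DENSITY_KG_M3 = 1.2    # assumed: air near sea level at about 20 °C
AIR_CP_KJ_KG_K = 1.005     # specific heat of air
M3S_TO_CFM = 2118.88       # cubic metres per second to cubic feet per minute


def airflow_m3_per_s(heat_kw: float, delta_t_k: float) -> float:
    """Volumetric airflow needed to carry heat_kw at a given air temperature rise."""
    return heat_kw / (AIR_DENSITY_KG_M3 * AIR_CP_KJ_KG_K * delta_t_k)


for rack_kw in (10, 20, 50, 80):
    flow = airflow_m3_per_s(rack_kw, delta_t_k=12.0)
    print(f"{rack_kw:>3} kW rack: {flow:.2f} m^3/s ({flow * M3S_TO_CFM:.0f} CFM)")
```

Required airflow scales linearly with rack power, so an 80kW rack needs four times the air of a 20kW rack at the same temperature rise, which quickly becomes impractical within a single rack footprint.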

This limitation leads to uneven temperature distribution, hotspots, and increased risk of hardware failure. At the same time, cooling systems account for a substantial portion of total energy consumption, often between 30% and 50%, which directly impacts operational expenditure.

These challenges highlight the need for more advanced cooling strategies that can efficiently handle high heat loads while maintaining energy efficiency.

Advanced Cooling Technologies for AI Workloads

To address the thermal challenges of AI infrastructure, liquid cooling technologies are rapidly gaining traction. Direct-to-chip liquid cooling enables precise heat removal from CPUs and GPUs, significantly improving efficiency and supporting ultra-high-density deployments.
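
The advantage of liquid comes from its much higher heat capacity. As a minimal sketch, the same heat balance (heat equals mass flow times specific heat times temperature rise) gives the coolant flow a rack would need. The example assumes plain water as the coolant, a 10 K rise, and that all rack heat is captured by the liquid loop; real systems often use water-glycol mixtures and capture only part of the heat, so treat the numbers as indicative.

```python
WATER_CP_KJ_KG_K = 4.186   # specific heat of water
WATER_DENSITY_KG_L = 1.0   # assumed: about 1 kg per litre


def coolant_flow_l_per_min(heat_kw: float, delta_t_k: float) -> float:
    """Water flow needed to absorb heat_kw at a given coolant temperature rise."""
    mass_flow_kg_s = heat_kw / (WATER_CP_KJ_KG_K * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY_KG_L * 60.0


flow_80kw = coolant_flow_l_per_min(80.0, delta_t_k=10.0)
print(f"80 kW rack, 10 K rise: {flow_80kw:.0f} L/min of water")
```

Under these assumptions an 80kW rack needs on the order of 115 litres of water per minute, a flow that fits in modest pipework.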

Immersion cooling represents another advanced approach, where servers are submerged in dielectric fluids to achieve exceptional heat dissipation with minimal energy loss. This method is particularly suitable for hyperscale AI environments where performance and efficiency are critical.

Hybrid solutions such as rear door heat exchangers also provide a practical pathway for upgrading existing facilities. These systems capture heat at the rack level before it spreads into the data hall, improving overall cooling efficiency without requiring a complete redesign.

In AI-focused deployments, the trend is moving toward integrated thermal management systems that combine multiple cooling methods to optimize performance.

Integration of Cooling and Power Systems

One of the most significant shifts in AI data center design is the convergence of cooling and power systems. Rather than operating independently, these systems must now function as a coordinated ecosystem.

AI workloads are highly dynamic, with rapid fluctuations in power consumption during training and inference processes. This requires cooling systems to respond in real time, ensuring thermal stability under varying conditions.
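
One way to picture this coupling is a controller that uses measured rack power as a feed-forward signal and trims the result with coolant temperature feedback. The sketch below is purely illustrative: the function name, the 80kW rating, the 45 °C setpoint, the gain, and the minimum pump speed are all hypothetical values, not settings from any real product.

```python
def pump_speed_pct(
    rack_power_kw: float,
    outlet_temp_c: float,
    *,
    rated_kw: float = 80.0,        # hypothetical rack rating
    setpoint_c: float = 45.0,      # hypothetical coolant outlet target
    gain_pct_per_k: float = 5.0,   # hypothetical proportional gain
    min_pct: float = 20.0,         # keep some flow even at idle
) -> float:
    """Feed-forward on measured power plus proportional trim on outlet temperature."""
    feed_forward = 100.0 * rack_power_kw / rated_kw
    trim = gain_pct_per_k * (outlet_temp_c - setpoint_c)
    return max(min_pct, min(100.0, feed_forward + trim))


# A training job ramps up, then the rack drops back to near idle.
for power_kw, temp_c in [(8.0, 40.0), (40.0, 45.0), (80.0, 50.0)]:
    speed = pump_speed_pct(power_kw, temp_c)
    print(f"{power_kw:>4.0f} kW at {temp_c:.0f} °C -> pump at {speed:.0f}%")
```

Because the power signal changes before the temperature does, the feed-forward term lets the cooling loop react to a load step before heat builds up, while the temperature trim corrects for anything the power measurement misses.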

To achieve this level of coordination, operators are deploying intelligent monitoring platforms such as DCIM systems, combined with automation and AI-driven optimization tools. These technologies enable predictive maintenance, real-time load balancing, and continuous efficiency improvements, ultimately enhancing system reliability and reducing operational risk.

Modular Data Centers for AI Deployment

Modular and prefabricated data centers are emerging as a preferred solution for AI infrastructure due to their scalability and speed of deployment. These systems integrate power and cooling components into a unified architecture, allowing organizations to deploy high-performance environments in a fraction of the time required for traditional construction.

Factory pre-integration and testing ensure consistent quality, while modular designs allow for incremental expansion as demand grows. This makes them particularly suitable for edge computing scenarios and regions with limited infrastructure, where rapid deployment and flexibility are essential.

For businesses seeking to deploy AI capabilities quickly, modular data centers provide a practical and efficient alternative to conventional builds.

Energy Efficiency and Sustainability Considerations

As energy consumption continues to rise, improving efficiency and reducing environmental impact have become critical priorities for data center operators. High-efficiency UPS systems, optimized airflow management, and renewable energy integration are key strategies in achieving these goals.

Free cooling technologies are also widely adopted to reduce reliance on mechanical systems, particularly in suitable climates. Power Usage Effectiveness (PUE) remains a key performance metric, with advanced AI data centers targeting values below 1.3.
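
PUE is the ratio of total facility power to the power delivered to IT equipment, so a value of 1.0 would mean no overhead at all. The sketch below computes it for an assumed load breakdown and also shows what a given cooling share of total energy implies for PUE; the kW figures and function names are illustrative assumptions.

```python
def pue(it_kw: float, cooling_kw: float, power_loss_kw: float, other_kw: float = 0.0) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    total_kw = it_kw + cooling_kw + power_loss_kw + other_kw
    return total_kw / it_kw


def min_pue_for_cooling_share(cooling_share: float) -> float:
    """Lower bound on PUE when cooling takes the given fraction of total energy.

    Assumes everything that is not cooling goes to IT, which is the best case.
    """
    return 1.0 / (1.0 - cooling_share)


# Assumed example: 1000 kW IT, 200 kW cooling, 50 kW power-chain losses, 20 kW other.
example_pue = pue(1000.0, 200.0, 50.0, 20.0)
print(f"Example PUE: {example_pue:.2f}")

for share in (0.2, 0.3, 0.5):
    print(f"Cooling at {share:.0%} of total -> PUE of at least {min_pue_for_cooling_share(share):.2f}")
```

The second function makes the target concrete: a facility where cooling takes 30% of total energy cannot have a PUE below about 1.43, so reaching 1.3 requires bringing the cooling share under roughly 23%.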

By combining efficient power infrastructure with advanced cooling technologies, organizations can significantly reduce both operational costs and carbon footprint.

Future Trends in AI Data Center Cooling and Power

The future of AI data centers will be defined by increasing automation, higher density, and deeper system integration. AI-driven infrastructure management will enable predictive analytics, autonomous optimization, and real-time adjustments across both power and cooling systems.

At the same time, rack densities are expected to exceed 100kW, pushing the limits of current technologies and accelerating the transition toward liquid cooling as a standard solution. Traditional air cooling will gradually become less viable in high-performance environments, marking a fundamental shift in data center design philosophy.

Organizations that proactively adopt these technologies will be better positioned to handle the growing demands of AI workloads.

Conclusion

AI is redefining the foundation of data center infrastructure, placing unprecedented demands on both cooling and power systems. Traditional approaches are no longer sufficient to support high-density, high-performance environments.

To remain competitive, organizations must adopt integrated, scalable, and energy-efficient solutions that are specifically designed for AI workloads. By aligning infrastructure strategies with emerging technologies, data centers can achieve greater reliability, improved efficiency, and long-term sustainability.

Looking to build or upgrade your AI data center?

ГОТОВАЯ СИЛА provides end-to-end power and cooling solutions, including modular UPS systems, precision cooling technologies, and fully integrated modular data centers. Contact us today to design a high-efficiency, AI-ready infrastructure tailored to your business needs.