As data centers grow denser and more power-hungry, conventional HVAC systems are no longer sufficient. Precision Air Conditioning (PAC) has become the backbone of modern thermal management—delivering targeted, reliable cooling exactly where compute equipment needs it most.


1. What Is Precision Air Conditioning (PAC)?

Precision Air Conditioning (PAC), most often deployed as Computer Room Air Conditioning (CRAC) or Computer Room Air Handler (CRAH) units, is a specialized cooling system engineered specifically for IT environments. Unlike general-purpose commercial HVAC, PAC units are designed to hold temperature and humidity within the tight tolerances that servers, storage systems, and networking hardware require.

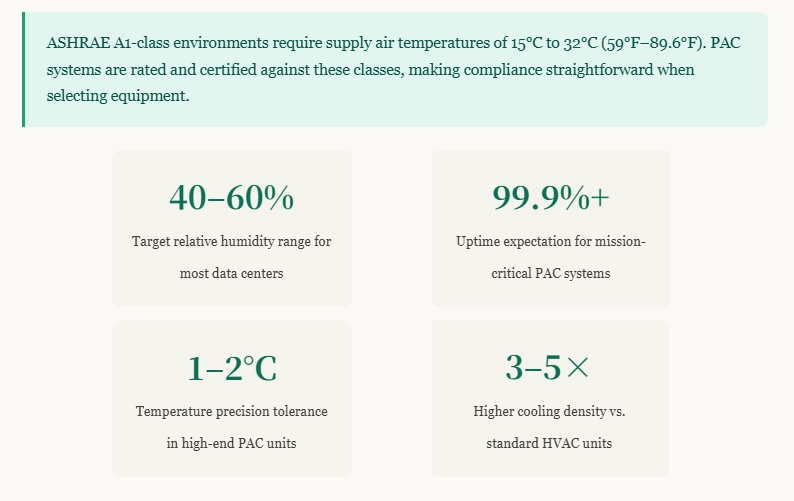

The term “precision” is deliberate: data center equipment typically demands an inlet air temperature between 18°C and 27°C (64°F–81°F), the recommended range in the ASHRAE Thermal Guidelines for Data Processing Environments, with relative humidity commonly held at 40%–60%. PAC systems are purpose-built to hold those parameters around the clock, 365 days a year, with near-zero tolerance for drift.
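To make the envelope concrete, here is a minimal sketch of how a monitoring script might flag readings that fall outside it. The `Envelope` class and `check_reading` function are hypothetical names invented for this illustration, and the default limits are simply the figures quoted above, not values taken from any particular PAC controller.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    """Allowed conditions; defaults are the figures quoted in this article."""
    temp_min_c: float = 18.0
    temp_max_c: float = 27.0
    rh_min_pct: float = 40.0
    rh_max_pct: float = 60.0


def check_reading(temp_c: float, rh_pct: float, env: Envelope = Envelope()) -> list[str]:
    """Return a list of violations; an empty list means the reading is in range."""
    problems = []
    if temp_c < env.temp_min_c:
        problems.append("temperature low")
    elif temp_c > env.temp_max_c:
        problems.append("temperature high")
    if rh_pct < env.rh_min_pct:
        problems.append("humidity low")
    elif rh_pct > env.rh_max_pct:
        problems.append("humidity high")
    return problems
```

A site with a different target range would pass its own `Envelope` rather than rely on the defaults.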

2. How PAC Systems Work in Data Centers

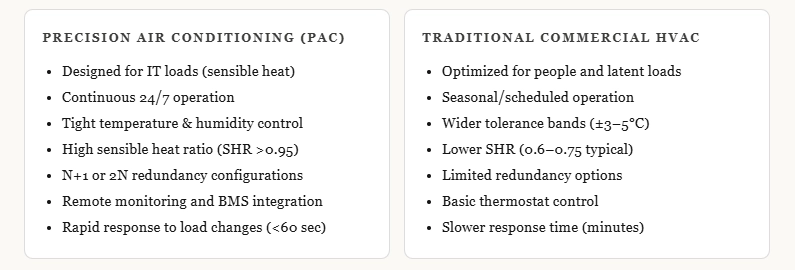

PAC units operate on the same refrigeration cycle as conventional air conditioners—a compressor circulates refrigerant through an evaporator coil that absorbs heat from the room air, then expels that heat through a condenser. What distinguishes PAC is the control architecture layered on top of this cycle.

Modern PAC units incorporate variable-speed fans, electronic expansion valves (EEVs), and microprocessor-based controllers that continuously sample inlet and outlet temperatures, humidity, and airflow. When a rack starts pulling more power during peak compute loads, the controller detects the rising inlet temperature and adjusts fan speed, refrigerant flow, and—in units with integrated humidification—moisture injection within seconds.
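The feedback loop described above can be sketched as a simple proportional-integral (PI) controller for fan speed. This is an illustration under stated assumptions, not how any specific PAC controller is implemented: the `FanController` class, its setpoint, and its gains are hypothetical, and a real unit would also modulate refrigerant flow and humidification.

```python
class FanController:
    """Toy PI controller mapping inlet temperature to fan speed (percent)."""

    def __init__(self, setpoint_c=24.0, kp=10.0, ki=0.5, min_pct=30.0, max_pct=100.0):
        # All values here are illustrative assumptions, not vendor defaults.
        self.setpoint_c = setpoint_c
        self.kp = kp            # percent of fan speed per degree C of error
        self.ki = ki            # percent of fan speed per (degree C * second)
        self.min_pct = min_pct  # fans never drop below this floor
        self.max_pct = max_pct
        self._integral = 0.0

    def update(self, inlet_temp_c, dt_s):
        """Return the new fan speed for one sample taken dt_s seconds after the last."""
        error = inlet_temp_c - self.setpoint_c
        candidate_integral = self._integral + error * dt_s
        raw = self.min_pct + self.kp * error + self.ki * candidate_integral
        clamped = max(self.min_pct, min(self.max_pct, raw))
        # Anti-windup: only accumulate the integral while the output is not saturated.
        if raw == clamped:
            self._integral = candidate_integral
        return clamped
```

Calling `update` once per sensor sample raises fan speed as the inlet temperature climbs above the setpoint and holds it at the floor when the room is cool.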

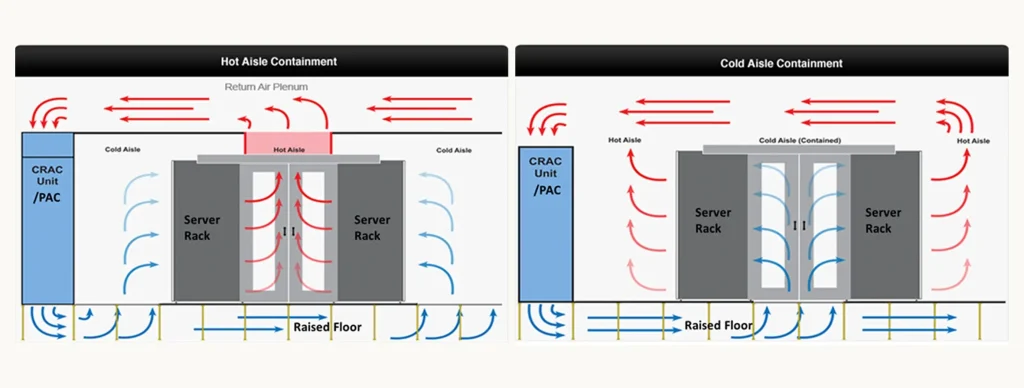

In a typical raised-floor data center, PAC units sit at the perimeter or between rows, drawing warm return air from the hot aisle, conditioning it, and delivering cool supply air into the underfloor plenum. Cold aisle containment (CAC) or hot aisle containment (HAC) systems work in tandem with PAC units to prevent hot and cold air from mixing, dramatically improving efficiency.

3. Types of PAC Units

Computer Room Air Conditioner (CRAC)

CRAC units use a self-contained compressor and refrigerant circuit. They are highly reliable because they are self-sufficient—no external chiller is required. CRACs are common in small-to-medium data centers and edge deployments where installing a chilled water plant would be impractical or cost-prohibitive.

Computer Room Air Handler (CRAH)

CRAHs use chilled water supplied by a central plant rather than an on-board compressor. The chilled water coil inside the CRAH unit does the heavy lifting, making CRAHs highly energy-efficient at scale. They are the dominant choice in large hyperscale data centers where centralized chiller plants can achieve economies of scale and, increasingly, free cooling through water-side economizers.

In-Row Cooling (IRC)

IRC units are installed directly between server racks, shortening the air path from supply to server inlet to a short, direct run within the row. This targeted approach is ideal for high-density rows exceeding 10–20 kW per rack, where traditional room-level PAC cannot deliver cool air fast enough before it picks up heat from adjacent equipment.

Rear-Door Heat Exchangers (RDHx)

RDHx units mount on the rear of a server rack and use chilled water to cool the exhaust air before it enters the room. Because heat is captured at the source, RDHx systems can support rack densities of 30 kW and above—a critical capability for GPU-intensive AI and HPC workloads.
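As a rough screening aid, the density figures quoted in this article can be turned into a lookup. The sketch below is an assumption-heavy illustration, not a design rule: the function name `suggest_cooling` and the exact cut-over points (8, 30, and 50 kW per rack) are hypothetical choices that fill the gaps between the quoted ranges, and a real selection would also weigh containment, chilled water availability, and cost.

```python
def suggest_cooling(rack_kw: float) -> str:
    """Map a per-rack load (kW) to a candidate cooling approach.

    Thresholds are illustrative assumptions loosely based on the
    ranges quoted in this article.
    """
    if rack_kw < 0:
        raise ValueError("rack load cannot be negative")
    if rack_kw <= 8:
        return "room-level CRAC/CRAH"
    if rack_kw < 30:
        return "in-row cooling"
    if rack_kw <= 50:
        return "rear-door heat exchanger"
    return "beyond typical air-based PAC; evaluate liquid cooling"
```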

4. PAC vs. Traditional HVAC: Key Differences

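The difference in sensible heat ratio (SHR), the share of total cooling capacity that lowers air temperature rather than removing moisture, is especially important. Server rooms generate almost exclusively sensible (dry) heat, with virtually no moisture load. Traditional HVAC, designed around the moisture that human occupants add to a space, spends part of its capacity dehumidifying air that was never humid; PAC units are tuned for sensible loads, delivering more useful cooling per kilowatt of electrical input.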
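A short calculation shows why SHR matters for sizing. The helpers below are a minimal sketch; the function names are invented for this example and the loads in the usage note are illustrative numbers, not measurements.

```python
def sensible_heat_ratio(sensible_kw: float, latent_kw: float) -> float:
    """SHR = sensible load / (sensible load + latent load)."""
    total_kw = sensible_kw + latent_kw
    if total_kw <= 0:
        raise ValueError("total load must be positive")
    return sensible_kw / total_kw


def usable_sensible_capacity_kw(total_capacity_kw: float, shr: float) -> float:
    """Portion of a unit's total capacity available for removing dry heat."""
    if not 0.0 <= shr <= 1.0:
        raise ValueError("SHR must be between 0 and 1")
    return total_capacity_kw * shr
```

For instance, two hypothetical 100 kW units with SHRs of 0.70 and 0.95 would offer 70 kW and 95 kW of sensible cooling respectively, so the lower-SHR unit would need to be oversized to carry the same server load.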

5. Critical Specifications and Performance Metrics

When evaluating PAC systems, facility engineers examine several key metrics:

Cooling Capacity (kW): Ranges from ~10 kW for small in-row units to 300 kW or more for large CRAH units. Capacity must be matched to the IT load with appropriate redundancy overhead—typically 25–50% over baseline.

Energy Efficiency Ratio (EER) / Coefficient of Performance (COP): Higher COP means more cooling output per unit of energy consumed. Modern high-efficiency CRAH units can achieve COP values of 5–8 when combined with free cooling, versus 2–3 for older CRAC designs.

Power Usage Effectiveness (PUE): While PUE is a facility-level metric, PAC efficiency directly drives it. Hyperscale operators target PUE below 1.2; best-in-class facilities reach 1.1 or lower. In conventionally cooled facilities, cooling accounts for roughly 30–40% of total energy consumption, which makes it one of the largest levers on PUE.

Airflow Rate (CFM or m³/h): Must match rack density and containment strategy. Undersized airflow leads to recirculation and hot spots; oversized airflow wastes energy on fan power.
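The metrics above reduce to simple arithmetic, sketched below. The function names are invented for this example, the air properties are textbook approximations for air near sea level at room temperature, and the 25% default overhead is just the low end of the range quoted above; none of this replaces a vendor sizing tool.

```python
AIR_DENSITY_KG_M3 = 1.2       # assumption: air near sea level at about 20°C
AIR_CP_KJ_PER_KG_K = 1.005    # specific heat of dry air
M3H_PER_CFM = 1.699           # 1 CFM is about 1.699 m³/h


def required_capacity_kw(it_load_kw: float, overhead: float = 0.25) -> float:
    """IT load plus a fractional overhead (0.25 means 25%)."""
    return it_load_kw * (1.0 + overhead)


def cop(cooling_output_kw: float, electrical_input_kw: float) -> float:
    """Coefficient of performance: cooling delivered per unit of power drawn."""
    return cooling_output_kw / electrical_input_kw


def pue(total_facility_kw: float, it_kw: float) -> float:
    """Power usage effectiveness: total facility power divided by IT power."""
    return total_facility_kw / it_kw


def airflow_m3_per_h(heat_kw: float, delta_t_k: float) -> float:
    """Airflow needed to carry heat_kw with an air temperature rise of delta_t_k.

    From Q = m_dot * cp * dT, volumetric flow = Q / (rho * cp * dT).
    """
    m3_per_s = heat_kw / (AIR_DENSITY_KG_M3 * AIR_CP_KJ_PER_KG_K * delta_t_k)
    return m3_per_s * 3600.0


def m3h_to_cfm(m3_per_h: float) -> float:
    """Convert cubic meters per hour to cubic feet per minute."""
    return m3_per_h / M3H_PER_CFM
```

Under these assumptions, a 10 kW rack with a 10 K rise from inlet to exhaust needs roughly 3,000 m³/h, or about 1,750 CFM.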

6. Benefits of PAC in Modern Data Centers

The primary benefit of PAC is reliability. A temperature excursion of even a few degrees above thermal design limits can trigger server throttling, unexpected shutdowns, and, in extreme cases, permanent hardware damage. PAC systems are designed to maintain their performance envelope even during partial equipment failures, thanks to N+1 or 2N redundancy.

Beyond reliability, PAC enables higher rack densities. Legacy perimeter cooling maxes out at 5–8 kW per rack in practice. Row-based and rack-level PAC approaches allow operators to colocate high-density GPU clusters, storage arrays, and networking spine switches in the same physical footprint, reducing real estate and interconnect latency.

PAC systems also integrate natively with Building Management Systems (BMS) and Data Center Infrastructure Management (DCIM) platforms, enabling real-time thermal mapping, predictive maintenance alerts, and automated load balancing across cooling units.

7. Challenges and Limitations

Despite its advantages, PAC comes with meaningful capital and operational costs. High-quality CRAH units and the chilled water infrastructure they require represent a significant upfront investment. Maintenance—including refrigerant checks, coil cleaning, and filter replacement—must be scheduled carefully to avoid disrupting operations.

Humidity management is another ongoing challenge. Over-humidification risks condensation on cold surfaces and corrosion; under-humidification creates electrostatic discharge (ESD) risks that can damage sensitive electronics. PAC units with integrated humidifiers and dehumidifiers mitigate this, but add complexity.

As rack densities push beyond 50–100 kW—driven by large-scale AI training clusters—even advanced air-based PAC approaches are beginning to reach their physical limits. Liquid cooling (direct liquid cooling, immersion cooling) is emerging as a complementary or replacement technology for extreme-density workloads, though PAC will remain essential for supporting the broader mix of equipment in any real-world data center.

8. Selecting the Right PAC System

Choosing a PAC system begins with an accurate IT load assessment—current draw plus a realistic growth projection over the facility’s intended lifespan. Key selection criteria include:

Cooling architecture: Room-level CRAC/CRAH for uniform density; in-row or rear-door for high-density zones. Most mature data centers use a hybrid approach.

Redundancy tier: Align with the facility’s Uptime Institute tier target. Tier III and IV facilities require N+1 or 2N cooling redundancy, meaning additional PAC capacity must be available and independently powered.

Free cooling compatibility: In moderate climates, economizer modes—either air-side (direct outside air) or water-side (cooling towers)—can eliminate mechanical refrigeration for hundreds of hours per year, dramatically cutting energy costs. Ensure selected PAC or CRAH units support economizer integration.

Footprint and serviceability: In colocation environments, floor space is revenue. Compact, high-capacity units with front-access service panels maximize density while simplifying maintenance without requiring full equipment shutdowns.
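The redundancy criterion in the list above translates directly into a unit count. The following sketch assumes identical units and ignores derating; `units_required` is a hypothetical helper written for this example.

```python
import math


def units_required(load_kw: float, unit_capacity_kw: float, scheme: str = "N+1") -> int:
    """Number of identical cooling units needed for a given redundancy scheme.

    N is the minimum count that covers the load; N+1 adds one spare unit,
    and 2N doubles the whole set.
    """
    if load_kw <= 0 or unit_capacity_kw <= 0:
        raise ValueError("load and unit capacity must be positive")
    n = math.ceil(load_kw / unit_capacity_kw)
    if scheme == "N":
        return n
    if scheme == "N+1":
        return n + 1
    if scheme == "2N":
        return 2 * n
    raise ValueError(f"unknown redundancy scheme: {scheme}")
```

With a 450 kW load and 100 kW units, N is 5, so N+1 calls for 6 units and 2N for 10.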

9. PAC Trends: Energy Efficiency and AI Integration

The PAC industry is undergoing rapid innovation driven by sustainability mandates and the extraordinary cooling demands of generative AI infrastructure. Three trends stand out:

AI-driven predictive cooling: Machine learning models trained on sensor data are being embedded into PAC control systems and DCIM platforms. Rather than reacting to temperature changes, these systems anticipate load shifts based on IT scheduling data and pre-condition the environment, reducing energy consumption and improving thermal stability.

Variable refrigerant flow (VRF) and inverter-driven compressors: Modern CRAC units increasingly use inverter-driven compressors that modulate capacity continuously rather than cycling on and off. This eliminates the temperature overshoot inherent in on/off control and can reduce compressor energy consumption by 20–40% under partial load conditions.

Integration with liquid cooling: As GPU clusters demanding 50–200 kW per rack become mainstream, PAC systems are evolving into hybrid platforms that manage both air and liquid circuits. Rear-door heat exchangers fed by precision-controlled chilled water loops allow operators to handle extreme density while preserving their air-cooling investment for the rest of the data hall.

According to industry analysis, the global precision air conditioning market is expected to grow significantly through 2030, fueled by hyperscale data center expansion, edge computing rollouts, and the thermal demands of AI infrastructure.

10. Frequently Asked Questions

What is the difference between a CRAC and a CRAH unit?

A CRAC (Computer Room Air Conditioner) includes its own self-contained compressor and refrigerant circuit, making it independent of a central chiller plant. A CRAH (Computer Room Air Handler) uses chilled water supplied by an external chiller. CRAHs are generally more energy-efficient at large scale; CRACs offer simpler deployment for smaller sites.

How much cooling capacity do I need per server rack?

Standard enterprise racks typically draw 3–8 kW. High-density GPU racks for AI workloads can draw 30–100 kW or more. Always engineer for peak load plus a minimum 25% redundancy buffer. Consult server vendor thermal specifications and use computational fluid dynamics (CFD) modeling for high-density deployments.

Can PAC systems support free cooling?

Yes. Modern CRAH units are designed to work with water-side economizers (cooling towers) that can provide “free” chilled water when outdoor temperatures drop below a threshold—typically around 10°C (50°F) for many climates. Air-side economizers are also used but require careful filtration to prevent particulate contamination of IT equipment.
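To get a first feel for the economizer potential of a site, one can count the hours in a weather record that fall below the threshold. The sketch below is a simplification under stated assumptions: `free_cooling_hours` is a hypothetical helper, the 10°C default is the figure quoted above, and a real study of a water-side economizer would normally work from wet-bulb temperatures and the cooling tower's approach rather than a single cutoff.

```python
def free_cooling_hours(hourly_temps_c, threshold_c=10.0):
    """Count hourly readings strictly below the free-cooling threshold."""
    return sum(1 for temp in hourly_temps_c if temp < threshold_c)


def free_cooling_fraction(hourly_temps_c, threshold_c=10.0):
    """Share of the record (0.0 to 1.0) that qualifies for free cooling."""
    temps = list(hourly_temps_c)
    if not temps:
        raise ValueError("need at least one temperature reading")
    return free_cooling_hours(temps, threshold_c) / len(temps)
```

Fed a full year of hourly data (8,760 readings), the first function gives the annual hours during which mechanical refrigeration could in principle be switched off.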

How often should PAC units be serviced?

Most manufacturers recommend quarterly preventive maintenance including filter inspection and replacement, coil cleaning, refrigerant pressure checks, belt and bearing inspection, and control calibration. Annual comprehensive service by a certified technician is also standard practice to maintain warranty coverage and efficiency.

Is PAC still relevant with liquid cooling becoming popular?

Absolutely. Liquid cooling addresses the highest-density racks, but the vast majority of equipment in any data center—networking, storage, management servers—still relies on air cooling. PAC will remain essential for decades, increasingly as part of a hybrid infrastructure that combines air and liquid cooling zones within the same facility.

Precision air conditioning is a core data center technology for ensuring thermal stability, energy efficiency, and long-term reliability of IT infrastructure. With increasing demand for scalable, high-density deployments, it is no longer optional; it is essential. For organizations seeking advanced cooling solutions for modular, edge, or containerized environments, حصلت على القوة provides high-efficiency PAC systems designed for global data center applications.